Digital and analog. We often think of them as equivalent things in different domains, but that’s a misunderstanding, and we often use the terms incorrectly.

For instance, if we run a mic through a preamp, we know the resulting signal is analog. And if we run it through an ADC and store it in a computer, it’s digital. But if we encode that signal as up-and-down voltage fluctuations at a high rate and send it over a wire, as the AES/EBU standard does, arguments break out over whether the signal is digital or analog.

The arguments stem from misunderstanding the terms, and better terms are available.

Analog

The definition of analog is, “Something that bears an analogy to something else; something that is comparable.” The electrical signal from a mic is an analog of the compression and rarefaction of the air produced as air moves through your vocal cords. We can amplify that signal and use it to drive a speaker, which moves the air, which in turn moves our eardrums.

Digital

Literally, digits are your fingers, used for counting. Something represented by numbers is digital. But numbers are an abstraction. Long ago, most of the world standardized on symbols for a base-10 (for our ten fingers) system of number representation. We can say “twenty-six” and write down “26”, or “0x001A”. In binary computer memory, we can store the equivalent bits as approximate voltages, save them to a hard drive as a group of magnetic states, and transfer them over a serial connection as fluctuations of voltage. In a way, these fluctuations of voltage and magnetic states are analogs of the digital values we want to represent.
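To make this concrete, here is a small Python sketch of one abstract number written as several different symbol strings, and of its bits held as approximate voltages. The 0 V / 3.3 V logic levels, the 1.65 V threshold, and the noise amplitude are illustrative assumptions, not taken from any particular memory or interface standard.

```python
import random

n = 26  # the abstract number "twenty-six"

# Three different symbol strings for the same number.
decimal = str(n)            # "26"
hexadecimal = f"0x{n:04X}"  # "0x001A"
binary = f"{n:08b}"         # "00011010"

# Assumed logic levels: a 0 is stored as about 0 V, a 1 as about 3.3 V,
# and each stored voltage is a little off from its nominal level.
random.seed(1)
voltages = [(3.3 if bit == "1" else 0.0) + random.uniform(-0.3, 0.3) for bit in binary]

# Reading back: any voltage above the assumed midpoint threshold counts as a 1.
recovered = "".join("1" if v > 1.65 else "0" for v in voltages)

print(decimal, hexadecimal, binary)
print([round(v, 2) for v in voltages])
print(int(recovered, 2))
```

The voltages are never exactly 0 or 3.3, yet the value read back is exactly 26. The voltages are analogs; the number survives because all we ask of each voltage is which side of the threshold it falls on.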

So, fundamentally, analog signals are analogs, and digital signals are analogs. No wonder some argue that a digital signal sent over a wire is an analog signal. It is truly both. Perhaps we aren’t using the best terms to describe what comes out of a mic and what comes out of a computer.

Continuous

Continuous implies no separation of values. A mic signal simply flows and fluctuates over time. It’s not a series of stable states, it’s a continuum.

Discrete

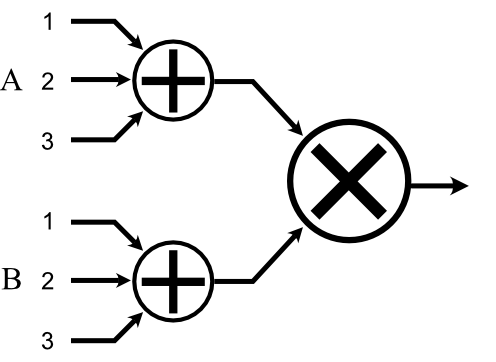

Digital audio data are discrete: individual samples updated at a fixed rate. But we can also have sampled analog, and it too is discrete. A bucket brigade device (BBD) stores each sample in a purely analog form, without intermediate conversion to a number, essentially holding each analog voltage on a capacitor.
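A bucket brigade can be modeled as a chain of held voltages that shifts one position per clock tick, which makes it a discrete-time delay line with no numbers involved. The sketch below is idealized and hypothetical: it assumes perfect charge transfer (no leakage, no added noise) and one stage per clock tick, ignoring how real parts are clocked. Of course, a Python float is itself a digital number; here it only stands in for the charge on a capacitor.

```python
class BucketBrigade:
    """Idealized BBD model (hypothetical): a chain of 'buckets', each
    holding one analog voltage. Assumes lossless, noiseless transfer."""

    def __init__(self, stages: int) -> None:
        # Each entry stands in for a capacitor's voltage, not a digital code.
        self.buckets = [0.0] * stages

    def clock(self, input_voltage: float) -> float:
        """One clock tick: the last bucket's charge goes to the output,
        and every other charge moves one bucket down the chain."""
        output = self.buckets[-1]
        self.buckets = [input_voltage] + self.buckets[:-1]
        return output


bbd = BucketBrigade(stages=4)
inputs = [0.12, -0.5, 0.731, 0.0, 0.2, 0.3, 0.4]
outputs = [bbd.clock(v) for v in inputs]
print(outputs)
```

Each input voltage reappears at the output four ticks later, unchanged in this ideal model: sampled and discrete, but never converted to a digit.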

“Sampled” implies discrete, but specifically a series of discrete values over time (we’ll discuss only uniform sampling, where samples are taken at a fixed rate).
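Uniform sampling can be written as x[n] = x(nT), where T = 1/fs is the fixed sample period. In the minimal Python sketch below, with an assumed 48 kHz sample rate and 1 kHz test tone, the function `x` is defined for every real time `t`, but the list keeps only its values at the instants nT.

```python
import math

fs = 48_000.0  # assumed sample rate, Hz
f = 1_000.0    # assumed test tone, Hz


def x(t: float) -> float:
    """A continuous-time signal: it has a value at every real time t (seconds)."""
    return math.sin(2 * math.pi * f * t)


# Uniform sampling: the n-th sample is taken at exactly t = n * T.
T = 1.0 / fs
samples = [x(n * T) for n in range(48)]  # 48 samples = one period of 1 kHz at 48 kHz

print(len(samples), T)
```

Between `samples[0]` and `samples[1]` the continuous signal still has values (for example `x(0.5 * T)`), but the sampled representation simply has nothing there.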

Digital is always discrete, or sampled. A number is a symbol, and while a series of symbols can change often, it can never be continuous. The analog lowpass (reconstruction) filter in a DAC is what ultimately converts the discrete samples into a continuous signal.
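The analog filter in a real DAC approximates an ideal lowpass, and mathematically the ideal lowpass driven by the samples yields Whittaker-Shannon (sinc) interpolation: a value at any real time, not just at sample instants. The Python sketch below computes that ideal output directly; it assumes a bandlimited test sine and a finite set of samples, so there is a small truncation error. It illustrates the math the filter approximates, not what DAC hardware computes.

```python
import math

fs = 48_000.0  # assumed sample rate, Hz
f = 1_000.0    # assumed test tone, well below the 24 kHz Nyquist limit
N = 2001       # finite number of samples; the ideal filter would use infinitely many


def x(t: float) -> float:
    return math.sin(2 * math.pi * f * t)


samples = [x(n / fs) for n in range(N)]


def sinc(u: float) -> float:
    """Normalized sinc: sin(pi*u) / (pi*u), with sinc(0) = 1."""
    if u == 0.0:
        return 1.0
    return math.sin(math.pi * u) / (math.pi * u)


def reconstruct(t: float) -> float:
    """Whittaker-Shannon interpolation: ideal-lowpass output at real time t (seconds)."""
    return sum(s * sinc(t * fs - n) for n, s in enumerate(samples))


t_between = 1000.5 / fs  # halfway between samples 1000 and 1001
print(reconstruct(t_between), x(t_between))
```

Evaluated halfway between two samples, near the middle of the record, the reconstruction agrees closely with the original continuous sine; the remaining difference comes from cutting off the infinite sum.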

Analog can be continuous or discrete (inside analog delay stomp boxes, for instance), though we almost always use “analog” as a synonym for “continuous.” Mistaking the two for the same thing is where more complex discussions go wrong.

So, back to the argument: is a signal stored on a hard drive, an SSD, or in RAM truly digital? Yes, it is. And it is also analog, because numbers are ideas, and we can’t store ideas, only analogs of ideas. It gets more confusing when we transfer a digital signal over a wire, because we can’t compartmentalize electrical flow; it’s always continuous. (Strictly speaking, electron flow itself is discrete, but we’re not operating at that level, and dealing with discrete flow would be horrendously intolerant of noise anyway. That’s why I say “electrical” and not “electrons.”) It’s a discrete digital signal, transported over a continuous flow of analog voltages.
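AES3 (AES/EBU) sends its bits using biphase-mark coding: the line level flips at the start of every bit cell, and flips again mid-cell for a 1. The Python sketch below is a simplified illustration, not an AES3 implementation (no preambles, subframes, or channel-status data): it encodes a few bits, sends them over a simulated "wire" as continuous, noisy voltages, and recovers the exact bits by asking only which side of 0 V each half-cell lands on. The amplitude and noise level are illustrative assumptions.

```python
import random


def bmc_encode(bits, level=1):
    """Biphase-mark code: two half-cells per bit. The level flips at the
    start of every bit cell, and flips again mid-cell for a 1."""
    halves = []
    for bit in bits:
        level = -level      # transition at every cell boundary
        halves.append(level)
        if bit:
            level = -level  # extra mid-cell transition encodes a 1
        halves.append(level)
    return halves


def bmc_decode(halves):
    """A 1 is a cell whose two halves differ; overall polarity doesn't matter."""
    return [int(halves[i] != halves[i + 1]) for i in range(0, len(halves), 2)]


bits = [1, 0, 1, 1, 0, 0, 1, 0]
amplitude = 2.0  # assumed line amplitude in volts (illustrative only)
random.seed(7)

# The "wire": each half-cell becomes an arbitrary real-valued, noisy voltage.
wire = [h * amplitude + random.gauss(0.0, 0.3) for h in bmc_encode(bits)]

# The receiver only cares which side of 0 V each half-cell falls on.
levels = [1 if v > 0 else -1 for v in wire]
print(bmc_decode(levels) == bits)
```

The voltages on the wire are continuous and never repeat exactly, yet the decoded bits are exact; and because the decoder compares the two halves of each cell, swapping the two conductors (inverting polarity) doesn’t change the result.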

But I wouldn’t call that an analog signal; it’s still a digital signal. If you amplify it and play it back, it will hit home that it’s not an analog of the music signal. It’s an analog of the states of the digital signal.