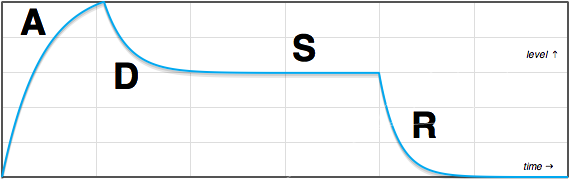

Certain aspects of the ADSR are up for interpretation. Exactly how the ADSR re-triggers when not starting from idle, for instance. Also, we can decide whether we want constant rate, or constant time control of the segments. The attack segment is fixed in height, so starting from idle (0.0 level) to peak (we’ll use 1.0 in our implementation—feel free to multiply the output to any level you want), there is no difference between constant rate and constant time. But for the decay and release segments, the distance traveled is dependent on the sustain level. I choose constant rate—this means that the release setting is for a time from the maximum envelope value (1.0) to 0.0; if sustain is set mid-way at 0.5, the release will take less time to complete than if it were at 1.0, but the rate of fall will be the same.

Time

There is one problem with the time an exponential move takes: in theory, it takes forever.

That’s OK for decay and release, since it will become so tiny after a point that there is no difference between the near-zero output and zero. For attack, however, it’s a big issue. We don’t move to the decay state until the attack state hits the maximum at 1.0. If it never hits, we’re in trouble. No problem—we just aim a little higher than 1.0, and when it crosses 1.0 we move to the decay state.

In practice, floating point numbers have limitations and it won’t take forever (depending on the exact math used). But we’d spend way too much time making unnoticeably tiny moves as we get close to 1.0; certainly, we want to clip the exponential attack. Hardware envelope generators do the same thing, hitting a trigger level to switch to the decay state.

But there’s an aesthetic reason to clip the attack exponential as well. There are a number of reasons that the shape of “decaying” upwards towards a maximum value is the wrong shape for a volume envelope. It’s likely that the popular attack curve for hardware generators was a compromise between an acceptable shape and convenience (it’s easy to generate).
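The attack clipping described in this section can be sketched in a few lines. This is only an illustration, not the article's source code: the helper name attackSamplesToPeak and its parameters are made up for the example, and coef is assumed to be a per-sample feedback multiplier between 0 and 1.

```cpp
// Hypothetical helper (not from the article's code): counts the iterations an
// exponential attack needs to cross 1.0 when it aims at (1.0 + overshoot).
// Returns -1 if the peak is not reached within maxSamples.
int attackSamplesToPeak(double coef, double overshoot, int maxSamples) {
    const double target = 1.0 + overshoot;  // aim a little higher than the peak
    double output = 0.0;                    // start from idle
    for (int n = 1; n <= maxSamples; ++n) {
        // Close a fixed fraction of the remaining distance to the target.
        output = target + (output - target) * coef;
        if (output >= 1.0)
            return n;  // crossed the peak: clip to 1.0 and switch to decay here
    }
    return -1;
}
```

With an overshoot of 0.0 the curve only creeps toward 1.0, so a bounded loop like this one gives up. With a small positive overshoot it crosses 1.0 after a finite, predictable number of samples.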

Math

While the math of the curve itself is simple due to the nature of generating an exponential curve iteratively (we just feed back a portion), the math of relating rates to coefficients is a bit more work. I decided that instead of arbitrary (“1-10”) control settings for the rates, I wanted to set the segments by time (in samples) for the segment to complete. So, a setting of 100 would make the envelope attack from 0.0 to 1.0 in 100 samples. A setting of 100 for release would hit 0.0 in 100 samples if sustain were set at maximum, 1.0, but sooner if it were set lower: constant rate, not constant time.

Again, we need only clip the attack segment, since we wouldn’t notice the difference between a full exponential and a slightly clipped one for the decay and release segments. However, putting a cap on those segments has some advantages. One is that we use a little less processing for envelopes in the idle state. But there’s another trick I chose to implement, after experimenting a bit and giving thought to exponential versus linear segments. Read on.

Shape control

One way to handle terminating exponential segments would be to set a threshold that is deemed “close enough”. For instance, as the attack segment gets within, say, 0.001 of 1.0, we simply set the output to 1.0 and move to the decay state. But if we take a slightly different approach, shooting for a target of 1.001 instead, and terminating when it hits or crosses 1.0, then we won’t have a noticeable step if we decide to make that “overshoot” value much larger.

Why would we want to make it much larger? That would wreck our nice exponential. In fact, if we make it too large, the moves would be virtually…linear! So, we could use a small number to stay near-exponential, or move to larger numbers to move towards linear segments, giving us a very versatile ADSR with curve control very simply.

More math

Usually, the rate of an exponential is based on the time it takes to decay to half the current value. But since we’re truncating our exponentials, I chose to define our rate controls as the time it takes to complete our attack from 0.0 to 1.0, or the decay or release to move from 1.0 to 0.0 (which happens for decay when the sustain level is set to 0.0, or release when sustain is 1.0).

Exponential calculations can be relatively CPU intensive. But, fortunately, envelope generators produce their output iteratively. And an iterative implementation of the exponential calculation is a trivial computation. We can calculate the first step of the exponential, whenever the appropriate rate setting is changed, then use it as the multiplier for subsequent iterations (remember the one-pole filter? Simple multiplies and additions).

rate = exp(-log((1 + targetRatio) / targetRatio) / time);

where targetRatio is our “overshoot” value, the constant 1 is because we are moving a distance of 1 (0.0 to 1.0, or 1.0 to 0.0), and time is the number of samples for the move. Typically, we’ll pick a targetRatio that we like (a larger number to approximate linear, or a small fraction to approach exponential); time comes from our rate knob, perhaps via a lookup table so that we can span from 1 sample to five or ten seconds worth of samples in a reasonable manner.
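As a quick check of the formula, here is a minimal sketch that computes the coefficient and runs an attack with it. The function names (calcCoef, attackLength) and the constant term base are assumptions for this example. The update uses the one-multiply, one-add form, with base chosen so that the curve settles at 1 + targetRatio.

```cpp
#include <cmath>

// Per-sample multiplier for a segment that covers a distance of 1.0 in
// timeSamples iterations while aiming targetRatio past the end point.
double calcCoef(double timeSamples, double targetRatio) {
    return std::exp(-std::log((1.0 + targetRatio) / targetRatio) / timeSamples);
}

// Runs an attack from 0.0 and returns how many samples it takes to reach 1.0.
int attackLength(double timeSamples, double targetRatio) {
    const double coef = calcCoef(timeSamples, targetRatio);
    // Constant term of the update; makes the curve settle at 1 + targetRatio.
    const double base = (1.0 + targetRatio) * (1.0 - coef);
    const int limit = static_cast<int>(timeSamples) * 10 + 10;  // safety cap
    double output = 0.0;
    int n = 0;
    while (output < 1.0 && n < limit) {
        output = base + output * coef;  // one multiply and one add per sample
        ++n;
    }
    return n;
}
```

With a time setting of 100, the attack should finish at sample 100 for both a small targetRatio (near-exponential) and a large one (near-linear). Floating-point rounding on the final step can push it to 101.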

I chose to refer to the overshoot adjustment as a “target ratio” because it’s related to the size of the move, and it’s helpful to think of it in terms of dB, especially related to loudness. For the release to decay to -60 dB, for instance, we use a value of 0.001; -80 dB is 0.0001. Using smaller values will have a significant effect on how “wide” the top of the attack segment is, at a given attack rate setting. To convert from dB to the ratio value, use 10 raised to the power of your dB value divided by 20; in C, “pow(10, val / 20.0)”.
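To get a feel for how the target ratio shapes the attack, the sketch below converts dB to a ratio and evaluates the attack level partway through the segment. The closed form in attackLevelAt is derived here from the iteration (level = (1 + targetRatio) * (1 - coef^n)); it is not taken from the article's code. Both function names are hypothetical.

```cpp
#include <cmath>

// dB to linear ratio, e.g. -60 dB -> 0.001.
double dbToRatio(double dB) {
    return std::pow(10.0, dB / 20.0);
}

// Attack level after a given fraction (0..1) of the attack time has elapsed,
// for an attack that starts at 0.0 and aims at 1 + targetRatio.
double attackLevelAt(double targetRatio, double fraction) {
    const double remaining = std::pow(targetRatio / (1.0 + targetRatio), fraction);
    return (1.0 + targetRatio) * (1.0 - remaining);
}
```

Halfway through the attack, a -60 dB target ratio is already at roughly 0.97 (a wide, flat top), while a target ratio of 100 sits at roughly 0.50, which is close to a straight line.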

Another pleasant byproduct of using this “target ratio” (overshoot) for all curves is that we don’t need to guard against output decaying into denormals and ruining performance.

Next: Code

Up next is source code. As usual, I try to keep the code simple yet flexible. You may want to add features to auto-retrigger the envelope for repeating patterns, add delay and hold features, or add additional segments—that’s where you distinguish yourself. And this ADSR has features lacking in many implementations already—adjustable curves, and precise specification of attack time in samples, for instance.

How did you find the equation for rate = exp(-log((1 + targetRatio) / targetRatio) / time);

I have been trying to figure out how to derive it from the exponential equation y(x) = a * b^x + c unsuccessfully. Googling isn’t providing much help and neither is stackoverflow. I know it has been a while but any help you can give would be appreciated.

Basically, I just thought about straightening out the exponential relationship (of the ratio of the total target distance to the part that overshoots) with log, so I could divide it up evenly by time (otherwise the increments have an exponential relationship), then putting it back to exponential. (The problem is similar to calculating where to draw your pixels on a log frequency plot.) It simplifies to this, which may or may not be clearer (but ended up being slower unless I dropped to single precision): 1 / pow(1 / targetRatio + 1, 1 / time);

Nice article, I found it useful when looking for the right curves for attack/decay/release for the synthesizer I’m making. But I found simpler formulas:

attack : currentEnvelopeLevel = (maxEnvelopeLevel - currentEnvelopeLevel) * attackRate

It’s maybe not a true logarithmic curve, but it looks (and sounds) close. You need to set maxEnvelopeLevel higher than your real max env level to avoid the problem explained in the “Time” section (envelope never reaching max). attackRate is a very small number (usually between 1 and 0.000001).

decay : currentEnvelopeLevel = currentEnvelopeLevel * decayRate

if(currentEnvelopeLevel < sustainLevel) currentEnvelopeLevel = sustainLevel;

Multiplying the current env level by a number a little smaller than 1 (like 0.999) produces an inverse-log-looking curve.

I think you mean “currentEnvelopeLevel += (maxEnvelopeLevel - currentEnvelopeLevel) * attackRate” (note the “+=”)? In any case, it’s not simpler, it’s essentially the same. I rearranged the execution for efficiency, but my code has “output = attackBase + output * attackCoef;” for the iteration.
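The equivalence of the two forms is easy to check numerically. In this sketch the mapping coef = 1 - rate and base = target * rate is worked out here from the algebra, and the function name maxDifference is hypothetical.

```cpp
#include <cmath>

// Runs both update forms side by side and returns the largest difference seen.
double maxDifference(double target, double rate, int steps) {
    const double coef = 1.0 - rate;     // feedback multiplier
    const double base = target * rate;  // constant added each sample
    double a = 0.0;                     // "+=" form from the comment
    double b = 0.0;                     // rearranged base/coef form
    double worst = 0.0;
    for (int n = 0; n < steps; ++n) {
        a += (target - a) * rate;
        b = base + b * coef;
        worst = std::fmax(worst, std::fabs(a - b));
    }
    return worst;
}
```

Expanding a + (target - a) * rate gives target * rate + a * (1 - rate), so the two sequences should differ only by floating-point rounding.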

Hey Nigel

Thanks for the awesome article. I’m noticing that when generating the envelope using linear or log curves, the time length in samples is reduced vs. a straight linear execution. Is this expected behaviour? I’ve trawled my code a few times now and haven’t been able to spot my error.

Hi James,

I need to know more to understand what you are seeing. As I noted in the first paragraph of the article, the times are for a move from one extreme to the other, 0.0-1.0 for attack, 1.0-0.0 for decay and release. For instance, if I’d made the decay time to be the decay segment time (1.0 to the sustain level), the decay rate would need recalculating any time the sustain level is adjusted (and how do you want to handle that when you’re already in the decay? It would be very goofy to have the rate speeding up and slowing down as you twiddle sustain in a long decay, for instance; also, it would be very easy to end up with a negative time to completion). And that doesn’t make sense anyway in an analog emulation—capacitor discharge rates don’t change when you change sustain.

So, the attack stage is pretty straight forward—the attack should always end at the same time, no matter what the shape is. For decay and release, you’d need to check them with sustain at 0.0, and sustain at 1.0, respectively. You should get the same times for any shape. In between is another story: Because the rate is constant from 1.0-0.0, if you set sustain at 0.5, say, you’re truncating the movement to 0.0, and an exponential decay is going to do the fastest movement at the beginning, so it will land on the sustain level quicker than a near-linear decay at the same setting. I suspect this is what you’re seeing, assuming everything is working right.
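The effect described here can be reproduced with a small experiment. This sketch assumes the decay follows the full 1.0-to-0.0 curve (aimed at -targetRatio) and simply stops when it reaches the sustain level, as described above. An actual implementation may aim the decay differently, and the names calcCoef and samplesToSustain are made up for the example.

```cpp
#include <cmath>

// Per-sample multiplier for a full-scale move completed in timeSamples iterations.
double calcCoef(double timeSamples, double targetRatio) {
    return std::exp(-std::log((1.0 + targetRatio) / targetRatio) / timeSamples);
}

// Samples needed for a decay that starts at 1.0 to first reach 'sustain',
// following the full-scale curve that would hit 0.0 after timeSamples.
int samplesToSustain(double timeSamples, double targetRatio, double sustain) {
    const double coef = calcCoef(timeSamples, targetRatio);
    const double base = -targetRatio * (1.0 - coef);  // curve settles at -targetRatio
    const int limit = static_cast<int>(timeSamples) * 10 + 10;  // safety cap
    double output = 1.0;
    int n = 0;
    while (output > sustain && n < limit) {
        output = base + output * coef;
        ++n;
    }
    return n;
}
```

With a time setting of 1000 and sustain at 0.0, both a near-exponential ratio (0.0001) and a near-linear one (100) take the full 1000 samples, give or take one. With sustain at 0.5, the near-exponential decay lands in under a tenth of that, while the near-linear one needs about half.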

Thanks for the quick response, Nigel. I’ll have a look. I am recalculating the coefficients as changes are made, so I’m thinking this should result in like-for-like times for linear vs. nonlinear. Thanks again for the insights.

James

Hey Nigel. It turns out you are correct. All of my attacks are exactly right; it’s the decays and releases that are doing what you described. In my user interface I present the envelope rates as time. Will users be annoyed by this truncation, given the length is not accurate? Keen to hear your perspective.

James

Well, James, in the normal behavior of classic ADSRs, you’re setting a time constant—the rate of discharge for a capacitor—not time. I think you’ll find behavior to be counterintuitive if you try to retain a decay time to a sustain level, and release time from there to zero. Even if you don’t allow the values to change during a cycle as you adjust sustain, it would still be weird having an envelope move very slowly off the peak when sustain is set high, and quickly when set low (for near-exponential, which is typical for classic ADSRs).

I took care to make the code sample-accurate for the full sweeps, but the reason was to make it easy for the developer to pick a range, not so much for a user twiddling a knob, which is less important. That is, I have no idea off the top of my head how many seconds my old Aries ADSRs take to do a full attack or decay, nor that of the old modular Moogs I used decades ago in the lab at USC, nor for a Minimoog. The knobs range 0-10 and you set the rate by sound. But if I’m designing a synth, it would help if I could decide that I want the attack to range to 8 seconds max, and be able to get that by setting max to samplerate * 8, for instance.

That’s a long way of saying, “that’s why classic synth ADSRs have controls that range 0-10, instead of being noted in absolute time.” Also, consider that you probably don’t want the controls to be linear either—it’s usually helpful to have fine control at the beginning of the knob’s rotation, and bigger steps as you turn. The difference between a 0 ms attack and 100 ms is huge, but the difference between 4 sec and 4.1 sec is not noticeable in most cases.
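One possible way to get that kind of knob response is an exponential mapping from knob position to time in samples. This is only a sketch of one option (a lookup table would work too). The function knobToSamples and its parameters are hypothetical, and the knob is assumed to be normalized to 0..1.

```cpp
#include <cmath>

// Maps a normalized knob position (0..1) to a time in samples so that equal
// knob movements multiply the time by equal factors. Assumes minSamples > 0.
double knobToSamples(double knob, double minSamples, double maxSamples) {
    return minSamples * std::pow(maxSamples / minSamples, knob);
}
```

For example, with minSamples = 1 and maxSamples = 8 * 44100, the first half of the knob's travel covers only about the first 600 samples, and the second half covers everything from there up to 8 seconds.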

Thanks Nigel,

In between our emails I reread your article. Typical of my arrogance, I glossed over the beginning where you explained the design choice of constant rate vs. constant time. FWIW, my knobs do have a nice exp curve to them. It took me a good while to understand this subtlety. Thanks ever so much for your article, insights and fast responses. I’ve really enjoyed the discussion.

James

Awesome, James—good luck with your project.

Just discovered this site, very cool!

> guard against output decaying into denormals and ruining performance.

Yes! Can’t be emphasized enough… I found this out the hard way on a project I worked on; I was playing a midi file through my synth software, and measuring performance. Everything looked great while the song was playing, but then when the last note ended, CPU use gradually increased >10x! It was because my ADSR release code was just output = output*releaseFactor, and once output got below the lowest normalized float, performance went down the tubes… (Google “float denormalized performance”).
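A small experiment shows the difference between the two release styles. Both helpers are hypothetical sketches. The first reproduces the plain multiply described in this comment, using float and assuming the platform is not set to flush denormals to zero. The second aims the release slightly below zero and stops when it crosses 0.0.

```cpp
#include <cmath>

// Plain multiplicative release: reports whether the output ever becomes a
// subnormal (denormalized) float within maxSamples.
bool naiveReleaseGoesSubnormal(float factor, int maxSamples) {
    float output = 1.0f;
    for (int n = 0; n < maxSamples; ++n) {
        output *= factor;
        if (std::fpclassify(output) == FP_SUBNORMAL)
            return true;
    }
    return false;
}

// Release aimed at -targetRatio: returns the sample at which the output
// crosses 0.0 (where the envelope can go idle), or -1 if it never does.
int overshootReleaseLength(double coef, double targetRatio, int maxSamples) {
    const double base = -targetRatio * (1.0 - coef);  // curve settles below zero
    double output = 1.0;
    for (int n = 1; n <= maxSamples; ++n) {
        output = base + output * coef;
        if (output <= 0.0)
            return n;  // crossed zero: switch to idle and output exactly 0.0
    }
    return -1;
}
```

The overshoot version never lingers near zero: it crosses 0.0 after a finite number of samples and can then output an exact 0.0 from the idle state.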

Maybe you cover this elsewhere, but definitely something to be kept in mind. Caused me quite a bit of head-scratching/banging.

Hi Nigel, thank you for the information. I’m trying to change the curve style, because all of these are similar to an x^n function. I’m trying to achieve curves similar to x^(1/n), but I have no idea how to change the e^-(f(r,t)) formula. Could you please help me?

Hi Nigel, I went through the article a couple of times but I still cannot figure out how the formula rate = exp(-log((1 + targetRatio) / targetRatio) / time); is obtained. Can you give me the key to solve this riddle? :)

Ugh, you’re going to make me think this out all over again (maybe should have kept notes! um, maybe did somewhere, but I’ll give it a moment of thought anyway…). Well, the basic idea is to take the ratio of the total distance to the asymptote (which will take forever to reach) to the place we end. If we start at 1 + targetRatio, we travel 1 to end at targetRatio. We want to divide that up into the amount of time (in iterations; if we’re processing this once per sample period, that means time in samples). But the rate is not constant for the exponential curve, so we need to take the log to straighten it (constant acceleration) so we can divide by time. This yields the change in velocity per iteration. But we need to get back to the exponential curve to get our position in time, so the exp. The minus sign is the equivalent of taking the reciprocal so we can use it as a multiplier instead of dividing, which is slower, in the iterative calculation. Whew, glad I could figure that out again.
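The same reasoning can be written out compactly. The symbols are shorthand introduced here, not notation from the article: r is targetRatio, T is time in samples, c is the per-sample multiplier (rate), and d_n is the remaining distance to the asymptote after n iterations.

```latex
\begin{align*}
d_n &= d_0\, c^{\,n}, \qquad d_0 = 1 + r \\
d_T &= r \;\Longrightarrow\; c^{\,T} = \frac{r}{1 + r} \\
c &= \left(\frac{r}{1 + r}\right)^{1/T}
   = \exp\!\left(-\,\frac{\ln\!\big((1 + r)/r\big)}{T}\right)
\end{align*}
```

The last line is the formula from the article, and the middle form matches the simplified pow() expression quoted earlier in the comments.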

Is there a way to extend this to work for values moving beyond 0..1? I could see it would work very well for portamento, but I haven’t been able to make it work where we would move from, e.g., 1 kHz to 2 kHz.

Do you mean the overall amplitude? You can multiply and offset the output to whatever range you want. For instance, I usually normalize all my units to 1, so my oscillators go from 0..0.5 where 1.0 would be the sample rate. In that case, for a 44.1 kHz sample rate, 1 kHz is 1000 / 44100 (about 0.02268), 2 kHz twice that. So multiplying the output of the ADSR by 0.02268 and adding 0.02268 would sweep the oscillator from 1k to 2k.